Last update: 5 days ago | Total questions: 65

The Cloud Associate (JNCIA-Cloud) content is now fully updated; all current exam questions were added 5 days ago. Including JN0-214 practice exam questions in your study plan offers more than basic test preparation.

Our JN0-214 exam questions frequently feature detailed scenarios and practical problem-solving exercises that mirror real industry challenges. Working through these JN0-214 sample sets also helps you manage your time and pace yourself, so you can finish any Cloud Associate (JNCIA-Cloud) practice test within the allotted time.

Which statement about software-defined networking is true?

What are two characteristics of the Network Functions Virtualization (NFV) framework? (Choose two.)

Which component of a software-defined networking (SDN) controller defines where data packets are forwarded by a network device?

When comparing OpenShift and Kubernetes, which two resources are unique to OpenShift? (Choose two.)

Which two statements are correct about Kubernetes resources? (Choose two.)

Which two tools are used to deploy a Kubernetes environment for testing and development purposes? (Choose two.)

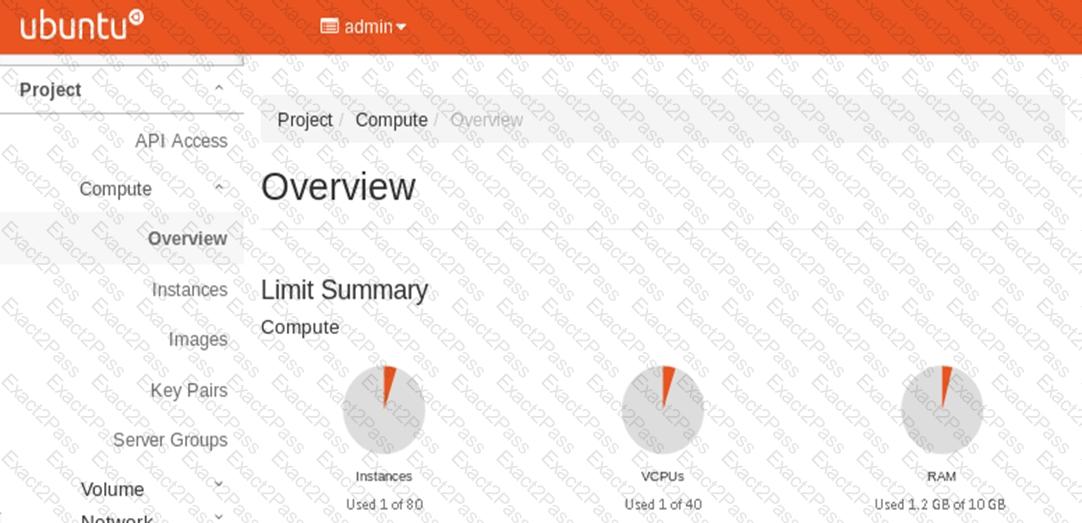

Click the Exhibit button.

Referring to the exhibit, which OpenStack service provides the UI shown?